What is automatic speech recognition (ASR)?

Automatic speech recognition is a process for automatically converting speech into text. ASR technologies use machine learning methods to analyse, process and output speech patterns as text. From generating meeting transcriptions and subtitles to virtual voice assistants, automatic speech recognition is suitable for a wide range of use cases.

What does automatic speech recognition mean?

Automatic speech recognition (ASR) is a subfield of computer science and computational linguistics focused on developing methods that automatically convert spoken language into a machine-readable form. When the output is text, the process is also referred to as Speech-to-Text (STT). ASR methods are based on statistical models and complex algorithms.

The accuracy of an ASR system is measured by the Word Error Rate (WER), which reflects the ratio of errors—such as omitted, added and incorrectly recognised words—to the total number of spoken words. The lower the WER, the higher the accuracy of the automatic speech recognition. For example, if the word error rate is 10 percent, the transcript has an accuracy of 90 percent.
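The calculation can be sketched in a few lines of Python. This is a minimal illustration, assuming that words are separated by whitespace and compared case-insensitively. The function name `word_error_rate` and the sample sentences are made up for this example. The errors are counted with a standard word-level edit distance.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] is the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: a spoken word was omitted
                curr[j - 1] + 1,     # insertion: an extra word was added
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


reference = "please send the report to the whole team before friday"
hypothesis = "please send a report to the whole team before friday"
wer = word_error_rate(reference, hypothesis)
print(f"WER: {wer:.0%}, accuracy: {1 - wer:.0%}")  # WER: 10%, accuracy: 90%
```

One word out of ten is wrong here, which gives the 10 percent error rate from the example above.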

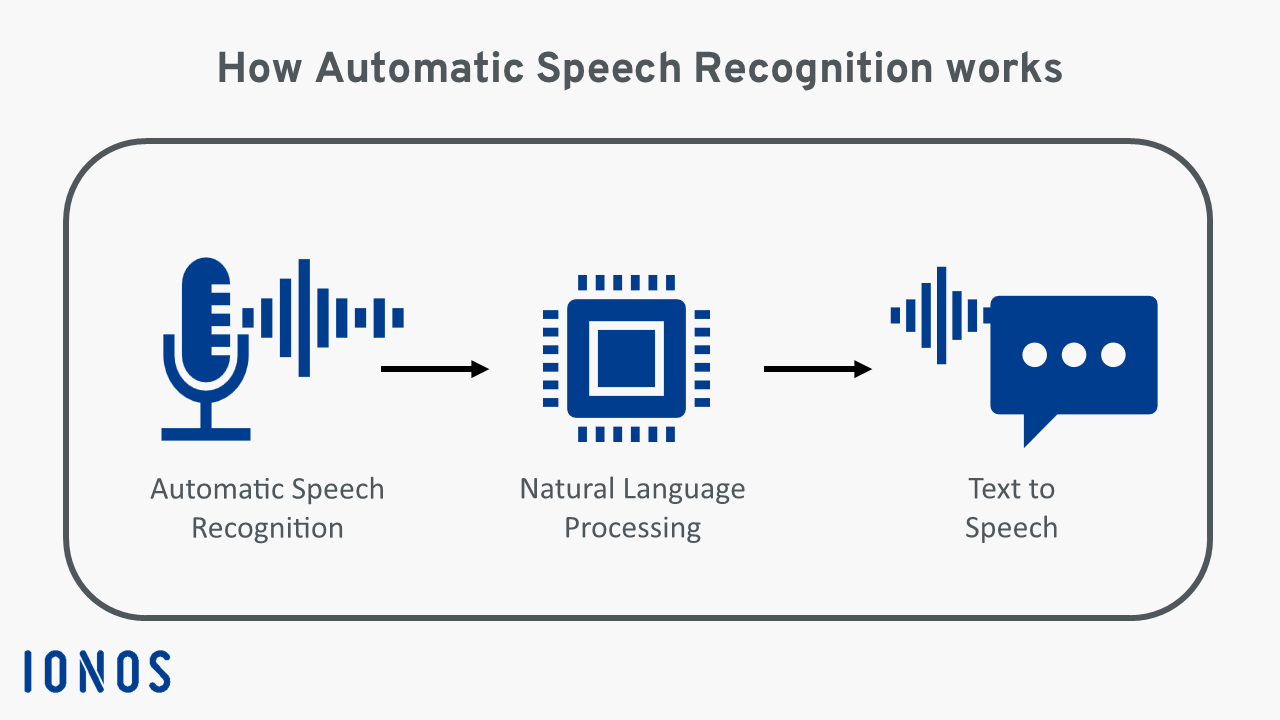

How does automatic speech recognition work?

Automatic speech recognition runs through several consecutive steps that build on one another. Each phase is outlined below:

- Capturing speech (automatic speech recognition): The system captures spoken language through a microphone or other audio source.

- Processing speech (natural language processing): First, the audio recording is cleaned of background noise. Then, an algorithm analyses the phonetic and phonemic characteristics of the language. Next, the captured features are compared to pre-trained models to identify individual words.

- Generating text (speech to text): In the final step, the system converts the recognised sounds into text.
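To make the processing step more concrete, the following Python sketch splits a signal into short overlapping frames, computes two very simple features per frame and discards quiet frames. It is a toy illustration only: the function names, the 16 kHz sample rate, the frame sizes and the energy threshold are assumptions for this example, and the energy gate is a crude stand-in for real noise reduction. Production systems typically use richer features such as mel-frequency representations.

```python
import math

SAMPLE_RATE = 16_000  # samples per second (assumed for this example)


def frame_signal(samples, frame_size, hop_size):
    """Split a list of samples into overlapping frames of equal length."""
    return [samples[start:start + frame_size]
            for start in range(0, len(samples) - frame_size + 1, hop_size)]


def frame_features(frame):
    """Return (mean energy, zero-crossing rate) for a single frame."""
    energy = sum(s * s for s in frame) / len(frame)
    crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a < 0) != (b < 0))
    return energy, crossings / (len(frame) - 1)


def drop_quiet_frames(frames, energy_threshold):
    """Keep only frames whose mean energy exceeds the threshold."""
    return [f for f in frames if frame_features(f)[0] > energy_threshold]


# Synthetic input: 0.1 s of silence followed by 0.1 s of a 440 Hz tone.
silence = [0.0] * 1600
tone = [0.5 * math.sin(2 * math.pi * 440 * n / SAMPLE_RATE) for n in range(1600)]

# 25 ms frames (400 samples) taken every 10 ms (160 samples).
frames = frame_signal(silence + tone, frame_size=400, hop_size=160)
voiced = drop_quiet_frames(frames, energy_threshold=0.01)
features = [frame_features(f) for f in voiced]
print(f"{len(frames)} frames in total, {len(voiced)} kept after the energy gate")
```

In a real system, the resulting feature vectors would then be passed to a trained model that maps them to sounds and words.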

Comparing ASR algorithms — Hybrid approach vs deep learning

There are generally two main approaches to automatic speech recognition. In the past, traditional hybrid approaches like stochastic Hidden Markov models were primarily used. Recently, however, deep learning technologies have been increasingly employed, as the precision of traditional models has plateaued.

Traditional hybrid approach

Traditional models require force-aligned data, meaning they use the text transcription of an audio speech segment to determine where specific words occur. The traditional hybrid approach combines a lexicon model, an acoustic model and a language model to transcribe speech:

- The lexicon model defines the phonetic pronunciation of words. A separate data or phoneme set must be created for each language.

- The acoustic model focuses on modelling the acoustic patterns of the language. Using force-aligned data, it predicts which sound or phoneme corresponds to different segments of speech.

- The language model learns which word sequences are most common in a language, aiming to predict the words most likely to come next in a given sequence.
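The idea behind the language model can be illustrated with a toy bigram model that counts which word follows which. This is a simplified sketch, not how production language models are built: the three-sentence corpus and the function names are invented for this example, and no smoothing is applied.

```python
from collections import Counter, defaultdict


def train_bigram_model(sentences):
    """Count how often each word follows the previous one."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = ["<s>"] + sentence.lower().split()  # <s> marks the sentence start
        for previous, current in zip(words, words[1:]):
            counts[previous][current] += 1
    return counts


def next_word_probabilities(counts, previous):
    """Return the relative frequency of each word seen after `previous`."""
    followers = counts.get(previous, Counter())
    total = sum(followers.values())
    if total == 0:
        return {}
    return {word: n / total for word, n in followers.items()}


corpus = [
    "recognise speech with a microphone",
    "recognise speech in real time",
    "recognise a beach in a photo",
]
model = train_bigram_model(corpus)
probs = next_word_probabilities(model, "recognise")
print(max(probs, key=probs.get))  # speech
```

When the acoustic model cannot clearly separate two similar-sounding candidates, scores like these help the system favour the word sequence that is more likely in the language.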

The main drawback of the hybrid approach is that its accuracy is difficult to improve any further. Additionally, training three separate models is very time- and cost-intensive. However, because there is extensive knowledge available on how to build a robust model with this approach, many companies still choose this option.

Deep learning with end-to-end processes

End-to-end systems can directly transcribe a sequence of acoustic input features. The algorithm learns how to convert spoken words into text using a large amount of paired data. Each data pair consists of an audio file containing a spoken sentence and the corresponding transcription of that sentence.

Deep learning approaches such as CTC (Connectionist Temporal Classification), LAS (Listen, Attend and Spell) and RNN-T (RNN Transducer) can be trained to deliver precise results even without using force-aligned data, lexicon models or language models. Many deep learning systems are still paired with a language model, however, as it can further enhance transcription accuracy.
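To show why CTC does not need force-aligned data, the following Python sketch decodes the per-frame output of a CTC-trained model. The model emits a probability for every symbol at every frame, including a special blank symbol. The simplest readout, greedy or best-path decoding, picks the most likely symbol per frame, merges consecutive repeats and then removes the blanks. The alphabet and the probabilities below are made up for illustration, and real systems often use beam search instead of this greedy variant.

```python
from itertools import groupby

BLANK = "_"  # the CTC blank symbol (the character chosen here is an assumption)


def ctc_greedy_decode(frame_probabilities, alphabet):
    """Best-path CTC decoding: argmax per frame, merge repeats, drop blanks."""
    best_path = [
        alphabet[max(range(len(probs)), key=probs.__getitem__)]
        for probs in frame_probabilities
    ]
    collapsed = [symbol for symbol, _ in groupby(best_path)]
    return "".join(symbol for symbol in collapsed if symbol != BLANK)


alphabet = [BLANK, "a", "l"]
frames = [
    [0.10, 0.80, 0.10],  # a
    [0.10, 0.20, 0.70],  # l
    [0.20, 0.10, 0.70],  # l again: merged with the previous frame
    [0.90, 0.05, 0.05],  # blank: separates the two l sounds
    [0.10, 0.10, 0.80],  # l: counted as a new letter
]
print(ctc_greedy_decode(frames, alphabet))  # all
```

The blank symbol is what allows the output to contain doubled letters while the model remains free to spread each letter over as many frames as it needs.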

The end-to-end approach for automatic speech recognition offers greater accuracy than traditional models. These ASR systems are also easier to train and require less human labour.

What are the main applications for automatic speech recognition?

Thanks to advances in machine learning, ASR technologies are becoming increasingly accurate and more powerful. Automatic speech recognition can be used across various industries to increase efficiency, improve customer satisfaction and/or boost ROI. The most important areas of application include:

- Telecommunications: Contact centres use ASR technologies to transcribe and analyse customer conversations. Accurate transcriptions are also needed for call tracking and for phone solutions implemented via cloud servers.

- Video platforms: The creation of real-time subtitles on video platforms has now become an industry standard. Automatic speech recognition is also helpful for content categorisation.

- Media monitoring: ASR APIs make it possible to analyse TV shows, podcasts, radio broadcasts and other types of media for brand or topic mentions.

- Video conferencing: Meeting solutions like Zoom, Microsoft Teams and Google Meet rely on accurate transcriptions and content analysis to generate key insights and guide relevant actions. Automatic speech recognition can also provide live subtitles for video conferences.

- Voice assistants: Virtual assistants like Amazon Alexa, Google Assistant and Apple’s Siri rely on automatic speech recognition. This technology allows the assistants to answer questions, perform tasks and interact with other devices.

What role does artificial intelligence play in ASR technologies?

Artificial intelligence helps improve the accuracy and overall functionality of ASR systems. In particular, the development of large language models has led to a significant improvement in natural language processing. A large language model can not only perform translations and create complex, highly relevant texts, but can also recognise spoken language. ASR systems benefit greatly from advancements in this area. AI is also beneficial for the development of accent-specific language models.

What are the strengths and weaknesses of automatic speech recognition?

Compared to traditional transcription, automatic speech recognition offers several advantages. A key strength of modern ASR processes is their high accuracy, stemming from the ability to train these systems with large datasets. This enables improved quality in subtitles or transcriptions, which can also be provided in real time.

Another major benefit is increased efficiency. Automatic speech recognition allows companies to scale, expand their service offerings faster and reach a larger customer base. ASR tools also make it easier for students and professionals to document audio content, for example, during a business meeting or university lecture.

Although they are more accurate than ever before, ASR systems still cannot match human accuracy. This is largely due to the many nuances in spoken language. Accents, dialects, tone variations and background noise remain challenging for these systems, and even the most powerful deep learning models struggle with input that doesn’t match expected or typical patterns. Another concern is that ASR technologies often process personal data, raising issues regarding privacy and data security.